ACTSET: An Approach to AI Prompting for Language Educators

By Kim Edmunds, Columbia University Language Resource Center

DOI: https://www.doi.org/10.69732/JYQJ5624

The field of language teaching loves an acronym, and I am no exception. When done well, an acronym packages a complex schema into something memorable, meaningful, and actionable – three qualities educators always look for when adding to their toolkits. And rarely has there been such a need for complex yet actionable schemata as now, during our ongoing adaptation to Generative AI (GenAI). In this piece, I’d like to share a new acronym: ACTSET, a heuristic for prompt design, refinement, and critical reflection that was developed with language educators in mind.

ACTSET is situated within what scholars have termed prompt literacy: “the ability to effectively formulate, understand, and evaluate prompts to elicit appropriate responses from AI systems” (Gattupalli et al., 2023). To support prompt literacy for a general audience, GenAI companies like OpenAI (n.d.) and Anthropic (n.d.) offer broad guidance, emphasizing the importance of clarity, specificity, context, and iteration. These are the basic principles of prompt literacy, upon which any useful adaptation is built. Two excellent approaches designed specifically for educators are Jacobs and Fisher’s (2023) acronym CAST (Criteria, Audience, Specifications, Testing) and the recommendations of Joyner (2024), which also highlight the importance of “context, depth, and audience” (p. 23) as elements of an iterative process.

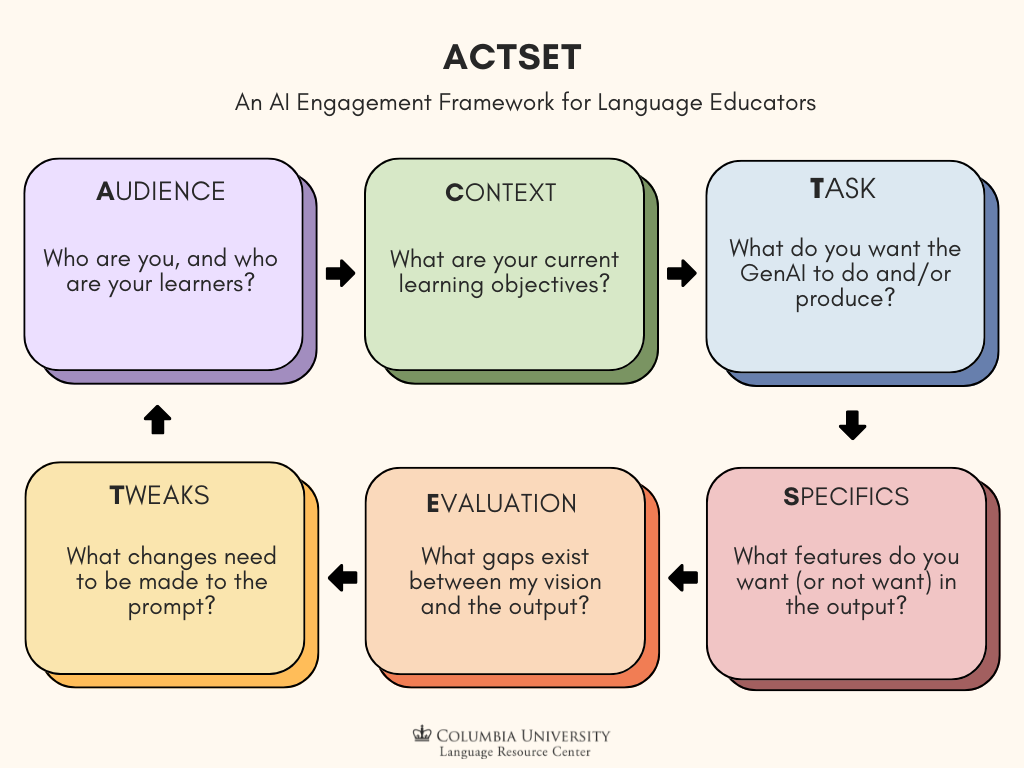

These existing approaches form the basis of ACTSET, which stands for Audience, Context, Task, Specifics, Evaluation, and Tweaks; they also inspired me to drill down further. I believe approaches to prompt literacy can and should be tailored to the user and audience to the greatest extent possible. In other words, language educators will benefit from a tool that meaningfully incorporates language teaching considerations into existing recommendations. In developing ACTSET, I asked myself, “What do these processes look like when used critically by and for a language teacher specifically? What questions should we reflect on along the way?” I developed targeted guiding questions for each stage of ACTSET to help language educators produce materials that are linguistically valid, pedagogically grounded, and critically evaluated. I hope ACTSET will be a memorable, meaningful, and actionable way for language instructors to engage with AI, whether they are testing the waters or are already immersed.

The graphic below illustrates the cyclical nature of ACTSET, followed by a more detailed breakdown with the essential guiding questions. The questions help you stay aligned with your own goals, as well as with general principles of good language instruction, such as learner-centeredness, meaningful input, and cultural relevance. If you prefer to see these details formatted as a table, please see Appendix A (or B, which features another example prompt).

Audience

In this step, you establish for the AI tool whom it is “speaking” to (you, the instructor) and for whom it is producing material (your learners). The general guiding question is “Who are you, as an instructor, and who are your learners?” Some additional questions for you to reflect on in this stage are:

- What else do you want the AI to “know” about you? (E.g. areas of expertise/interest, amount of teaching experience)

- Who are your learners in the context of their language journey? Consider:

  - Approximate proficiency level (referencing established benchmarks like CEFR or ACTFL descriptors helps GenAI tools)

  - First language(s)

  - Learning styles

  - Target language use domains

  - General educational backgrounds

  - Heritage speaker status

- What else do you want the AI to “know” about them? (Respecting their personal privacy, of course)

Below is an example of what the Audience component of a prompt might look like. Note that this is one part of a longer prompt that will be built upon in the following steps.

I am a college professor who teaches Elementary (CEFR A2/ACTFL Novice Mid-High) Spanish. My learners are in their first year of college and many of them are first-generation college students. Many are learning Spanish to connect with their Spanish-speaking relatives; some are also going into healthcare and want to use Spanish in their careers.

Context

You begin to set the scene, providing relevant information on the work you and your learners have done leading up to the moment of this interaction with AI. This is to ensure that the AI tool draws on your actual experience, and not generalizations from its training data about what a class like yours might look like. The guiding question is, “What are your current learning objectives?” Some additional questions for you to reflect on in this stage are:

- What has the class been learning, working on, making?

- What relevant materials can you upload to the chat that will anchor the tool with sample vocabulary, style, dialect, and more?

- What areas of linguistic and/or cultural competence do you know they need more support with?

We just finished a unit on family, including “found families” and “nontraditional” (e.g. non-nuclear, adoptive, etc.) families. Copies of both short articles we read are attached, and here is a link to a video they watched for homework: [] The learners are going to give a culminating presentation with an audience Q&A about their families.

Something worth noting here is that many AI tools can retain a “memory” of the details in the Audience and Context steps, a feature some instructors already take advantage of (Li et al., 2025). Having an ongoing, dedicated chat or other digital space for each course you teach could streamline the process (into TSET, perhaps?).

Task

At this stage, you begin to articulate what general form you would like the AI’s output to take. The guiding question is, “What do you want the AI to do and/or produce?” The verb(s) you use will be key here, so an additional question for reflection is:

- What actions will the AI take? Summarize, suggest, draft, design, incorporate, modify, synthesize, outline…

Please draft a descriptive rubric for evaluating this presentation.

Specifics

Or parameters, guidelines, guardrails, constraints – you know what makes good learning material, and in this stage, you bring that knowledge to bear. The general guiding question is, “What features do you want (or not want) in the output?” Additional questions for reflection are:

- How long and/or detailed should it be?

- What elements should be included, excluded, emphasized or de-emphasized?

Include organization, language use, timing (4-5 min range), and Q&A performance as criteria. There should be 3 scoring bands, with 25 total possible points. Weight language use the most.

At this point in the process, share the first iteration of your prompt with the AI tool:

I am a college professor who teaches Elementary (CEFR A2/ACTFL Novice Mid-High) Spanish. My learners are in their first year of college and many of them are first-generation college students. Many are learning Spanish to connect with their Spanish-speaking relatives; some are also going into healthcare and want to use Spanish in their careers. We just finished a unit on family, including “found families” and “nontraditional” (e.g. non-nuclear, adoptive, etc.) families. Copies of both short articles we read are attached, and here is a link to a video they watched for homework: [] The learners are going to give a culminating presentation with an audience Q&A about their families. Please draft a descriptive rubric for evaluating this presentation. Include organization, language use, timing (4-5 min range), and Q&A performance as criteria. There should be 3 scoring bands, with 25 total possible points. Weight language use the most.

Evaluation

This stage is the most important. You will not have anticipated everything in your first prompt; now you review the AI’s response to the first round of prompting. The general guiding question is, “What gaps exist between my vision and the output?” Here your expertise and lived experience become invaluable as you review. Additional questions for reflection are:

- What is or isn’t in the output that I didn’t anticipate?

- Is there anything in the output that could be confusing or misleading to learners?

- Is its use of the target language accurate and meaningful?

- Does the output reflect effective pedagogical practices?

- Does the output show evidence of any kind of bias (linguistic, cultural, etc.)?

- Is there extraneous information that I may be able to remove from the output to streamline learner understanding?
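For the rubric example, part of this review is mechanical: the Specifics stage asked for four named criteria, 3 scoring bands, 25 total points, and the heaviest weight on language use. For readers comfortable with a little code, the Python sketch below makes those countable checks explicit. It is a hypothetical illustration, not part of ACTSET itself: the `rubric` dictionary, its band labels, and its point values are invented to stand in for whatever the AI actually returned, and it assumes "3 scoring bands" means three bands per criterion.

```python
# Hypothetical sketch: compare an AI-drafted rubric with the countable
# requirements from the Specifics stage. The rubric below is invented
# for illustration; it is not actual AI output.

REQUIRED_CRITERIA = {"Organization", "Language use", "Timing", "Q&A performance"}
REQUIRED_BANDS = 3       # assumed to mean three bands per criterion
REQUIRED_TOTAL = 25      # total possible points
HEAVIEST = "Language use"

# Each criterion maps to its maximum points and its scoring-band labels.
rubric = {
    "Organization": {"points": 5, "bands": ["Beginning", "Developing", "Strong"]},
    "Language use": {"points": 10, "bands": ["Beginning", "Developing", "Strong"]},
    "Timing": {"points": 5, "bands": ["Beginning", "Developing", "Strong"]},
    "Q&A performance": {"points": 5, "bands": ["Beginning", "Developing", "Strong"]},
}


def find_gaps(rubric):
    """Return a list of mismatches between the rubric and the Specifics."""
    gaps = []
    # Every requested criterion should appear in the rubric.
    missing = REQUIRED_CRITERIA - set(rubric)
    if missing:
        gaps.append(f"Missing criteria: {sorted(missing)}")
    # The maximum points across criteria should add up to the requested total.
    total = sum(c["points"] for c in rubric.values())
    if total != REQUIRED_TOTAL:
        gaps.append(f"Total is {total} points, not {REQUIRED_TOTAL}")
    # Each criterion should have the requested number of scoring bands.
    for name, c in rubric.items():
        if len(c["bands"]) != REQUIRED_BANDS:
            gaps.append(f"{name} has {len(c['bands'])} bands, not {REQUIRED_BANDS}")
    # Language use should carry strictly more points than any other criterion.
    if HEAVIEST in rubric:
        others = [c["points"] for n, c in rubric.items() if n != HEAVIEST]
        if others and rubric[HEAVIEST]["points"] <= max(others):
            gaps.append(f"{HEAVIEST} is not weighted the most")
    return gaps


if __name__ == "__main__":
    gaps = find_gaps(rubric)
    print(gaps if gaps else "No countable gaps found")
```

A check like this only covers what can be counted. The questions above about accuracy, pedagogy, and bias still depend on your own judgment as a language educator.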

Tweaks

Once you’ve evaluated the AI’s first response and noted critical gaps between that output and what you actually want, you can ask yourself: “After the initial response, what changes need to be made to the prompt?” This may mean starting again at the Audience stage, or perhaps revisiting Context or Specifics, or even Task. An additional question for reflection is:

- What can/should I have the AI address in the next round, and what can/should I address myself?

There are a few different reasons to ask yourself whether you or the AI should implement your tweaks. One is that AI tools will not always be capable of making the changes you request, even once you point them out. If, for example, the output over-represents a certain dialect in its examples, the tool may lack the necessary data to update them with linguistic diversity in mind. Another possibility, if you teach a less commonly taught language, is that you may need to supplement its limited stock of grammatical examples and culturally embedded discourse strategies.

Another reason to contemplate how to make tweaks is the possibility of teachable moments. Instructors of any language might encounter stereotypes in AI-generated content that we have spent our careers trying to dispel. Such content, if we choose to leave it in the output, can present the opportunity to educate our learners about these stereotypes by discussing them in class, which comes with the added benefit of reminding learners about the importance of critical AI literacy (Gattupalli et al., 2023).

Finally, there is the question of expediency. There will be times when it is simply easier to alter the output ourselves, rather than make further requests in subsequent prompts. Perhaps you have found that, for your teaching context, asking the AI to use vocabulary better suited to beginners does not result in helpful changes. In that case, you might stop asking for this and modify texts yourself. In many cases, our own expertise is the better resource – for reasons of efficiency, but also for other deeply important reasons beyond the scope of this article. That being said, as GenAI technology continues to evolve and scale, its abilities will expand as well. You might consider occasionally circling back to previous prompts to see whether the AI’s responses to them have improved over time.

Here is how I requested tweaks based on the AI response to my first prompt:

Format the rubric as a table, not a bulleted list. Simplify the language using more common vocabulary (e.g. not words like “comprehensible”) and pose the descriptors as questions in the second person, so learners can use the rubric as a planning guide. Focus on appropriate vocabulary use, not grammatical accuracy.

I piloted a version of ACTSET with a group of Columbia University language lecturers in Fall 2025, and it was well received (in fact, a few suggested I write an article like this). I hope that my fellow language educators and technologists find it useful, and I welcome your thoughts if you decide to try it out. Relatedly, transparency is essential as we integrate AI use into our practice, especially if we expect it of our learners. In addition to indicating when AI was used to develop something, I suggest eliciting feedback from learners to understand their perspectives and refine our ability to use AI tools to meet a wide range of needs.

To conclude, I want to underscore that using ACTSET is iterative by design. As much as we would like for AI to be all about efficiency, the fact is that effective use of AI requires critical evaluation by human experts in order to be of pedagogical benefit. That’s not to say that the process needs to be burdensome and repetitive. Ideally, we’ll begin to feel more confident with practice, improving our ability to produce better prompts sooner, with less reference to guides like the admittedly busy tables below. Having already internalized plenty of higher-order processes in our careers (e.g. syllabus design), we are certainly capable of continuing to do so in the AI era. Indeed, our ability to do this is more important now than ever before. As with any new technology, engaging with AI tools in education requires a critical approach on the part of both learners and instructors (Digital Futures Institute, n.d.; Kohnke & Zou, 2025). With the thoughtful evaluation and adaptation that prompting requires, we ourselves stand to grow as educators – learning to ask new questions that broaden not only our understanding of GenAI, but our own teaching practice.

References

Anthropic. (n.d.). Claude prompting best practices. Retrieved March 16, 2026, from https://platform.claude.com/docs/en/build-with-claude/prompt-engineering/claude-prompting-best-practices

Digital Futures Institute. (n.d.). AI in education guides: Tips and tricks for prompt writing. https://www.tc.columbia.edu/digitalfuturesinstitute/learning--technology/instructional-guides--resources/self-paced-learning-guides/ai-in-education-guides-tips-and-tricks-for-prompt-writing/

Gattupalli, S., Maloy, R. W., & Edwards, S. A. (2023). Prompt literacy: A pivotal educational skill in the age of AI. College of Education Working Papers and Reports Series, 6. https://doi.org/10.7275/3498-wx48

Jacobs, H. H., & Fisher, M. (2023, June 26). Prompt literacy: A key for AI-based learning. ASCD. https://www.ascd.org/el/articles/prompt-literacy-a-key-for-ai-based-learning

Joyner, D. A. (2024). A teacher’s guide to conversational AI: Enhancing assessment, instruction, and curriculum with chatbots. Routledge.

Kohnke, L., & Zou, D. (2025). Artificial intelligence integration in TESOL teacher education: Promoting a critical lens guided by TPACK and SAMR. TESOL Quarterly, 59(S3). https://doi.org/10.1002/tesq.3396

Li, M., Belpoliti, F., Taha, G., & Zhang, M. (2025). World language teachers’ reported practices of integrating ChatGPT into instruction: A positioning analysis. Foreign Language Annals. https://doi.org/10.1111/flan.70041

OpenAI. (n.d.). Prompt engineering: Best practices for ChatGPT. Retrieved March 16, 2026, from https://help.openai.com/en/articles/10032626-prompt-engineering-best-practices-for-chatgpt

Appendix A

| Stage | Guiding Question | Further Reflection | Example |
|---|---|---|---|
| Audience | Who are you as an instructor, and who are your learners? | What else do you want the GenAI to “know” about you? (E.g. areas of expertise/interest, experience level in teaching) Who are your learners? What else do you want the GenAI to “know” about them? | I am a college professor who teaches Elementary (CEFR A2/ACTFL Novice Mid-High) Spanish. My learners are in their first year of college and many of them are first-generation college students. Many are learning Spanish to connect with their Spanish-speaking relatives; some are also going into healthcare and want to use Spanish in their careers. |
| Context | What are your current learning objectives? | What has the class been learning, working on, making? What areas of linguistic and/or cultural competence do you know they need more support with? | We just finished a unit on family, including “found families” and “nontraditional” (e.g. non-nuclear, adoptive, etc.) families. Copies of both short articles we read are attached, and here is a link to a video they watched for homework: [] The learners are going to give a culminating presentation with an audience Q&A about their families. |
| Task | What do you want the GenAI to do and/or produce? | What actions will it take? Summarize, suggest, draft, design, incorporate, modify, synthesize, outline… | Please draft a descriptive rubric for evaluating this presentation. |
| Specifics | What features do you want (or not want) in the output? | How long and/or detailed should it be? What elements should be emphasized or de-emphasized? | Include organization, language use, timing (4-5 min range), and Q&A performance as criteria. There should be 3 scoring bands, with 25 total possible points. Weight language use the most. |
| Evaluation | What gaps exist between my vision and the output? | | |
| Tweaks | After the initial response, what changes need to be made to the next prompt? | What can/should I have the GenAI address in the next round, and what can/should I address myself? | Format the rubric as a table, not a bulleted list. Simplify the language using more common vocabulary (e.g. not “comprehensible”) and pose the descriptors as questions in the second person, so learners can use the rubric as a planning guide. Focus on vocabulary use, not grammatical accuracy. |

Appendix B

| Stage | Guiding Question | Further Reflection | Example |
|---|---|---|---|
| Audience | Who are you and your learners? | What else do you want the GenAI to “know” about you? (E.g. areas of expertise/interest, experience level in teaching) Who are your learners? What else do you want the GenAI to “know” about them? | I am a college professor who teaches intermediate ESL. The learners are at an Intermediate High level on the ACTFL proficiency scale. Many are international students and business majors. |
| Context | What are your current learning objectives? | What has the class been learning, working on, making? What areas of linguistic and/or cultural competence do you know they need more support with? | We are working on improving reading skills for comprehension and discussion. |
| Task | What do you want the GenAI to do and/or produce? | What actions will it take? Summarize, suggest, draft, design, incorporate, modify, synthesize, outline… | Modify the text of this article to fit their reading level while still conveying key information. |
| Specifics | What features do you want (or not want) in the output? | How long and/or detailed should it be? What elements should be emphasized or de-emphasized? | Limit the text to 300 words and keep a few potentially new vocabulary words (with parenthetical definitions). Then generate 3 discussion questions about the topic for use in class. |
| Evaluation | What gaps exist between my vision and the output? | | |
| Tweaks | After the initial response, what changes need to be made to the next prompt? | What can/should I have the GenAI address in the next round, and what can/should I address myself? | Re-incorporate the narrative hook in the introduction to stay true to the style of the original. (Note: The way it chose which words to define seemed random, so I ended up taking over this part of the modification.) |

AI disclosure: Generative artificial intelligence was used to double-check the accuracy of the citation formatting.